Should educators be using Generative AI?

I didn't think the most provocative thing I'd do would be to create images using artificial intelligence

I’ve been described as provocative by a number of teachers, mainly because I try to argue that the education system would be a better place without church control. I always find this allegation surprising because I don’t find this idea to be challenging. However, I can understand why many people in the education system find the questioning of religious control of schools to be dangerous and, sometimes, disrespectful. I never attempt to be rude about religion and I’m far from being anti-religious. I am, however, obsessed with the subject.

I read and listen to anything that covers the topic of religion in schools and I am interested in the reactions and responses to my own writing. What I’ve noticed is that almost all respondents feel the need to say three things. Firstly, if they criticise the Catholic Church’s record in education, they must then follow up by saying that the Church did much good. Secondly, they seem to always say that talking about religion in schools is highly emotive. Finally, if they do respond publicly at all to my writing, they tend to pick out an inaccuracy in the piece rather than focusing on the message of the article. For example, I wrote a piece about ownership of schools where I said they are owned by religious orders rather than religious bodies. This was the only point that was focused on by a representative.

Since leaving X, I have migrated to Instagram to write posts. This means that I have to include an image. In order to do this, I have generated images using AI. I have been criticised recently for this, which made me pause. While I consider the pointing out of a small inaccuracy in an article I write to be almost an admission that the full article has hit a raw nerve and is therefore generally accurate, being admonished for using AI to generate images is different. The commenter isn’t really concerned about what I’ve written at all. They are more concerned about the use of generative AI imagery, especially in the education space.

The word used most often was “slop”. Cheap, lazy, inauthentic. A shortcut that supposedly cheapens thinking and undermines professional credibility. In some ways, I could disregard it in much the same way people used to point out grammar mistakes on social media.

However, I felt it is a criticism worth sitting with, because it raises a bigger question than whether an image was made in Canva, Midjourney, DALL·E, or by a graphic designer on Fiverr. It challenges me, and perhaps all of us, about what we think education work is, what parts of it matter most, and where we draw the line between craft and convenience. As I’m writing this article, half the education influencers in the country have generated an AI caricature of themselves. Is this acceptable or does it fall foul of the Internet Police calling out slop?

Let’s name the fear first.

When people say “AI slop”, they are usually not talking about images alone. They are talking about the creeping sense that everything is becoming flatter, faster, and more generic. Originality is being replaced by prompts; thinking is being replaced; we are sleepwalking into a world where content exists without any care for the consequences.

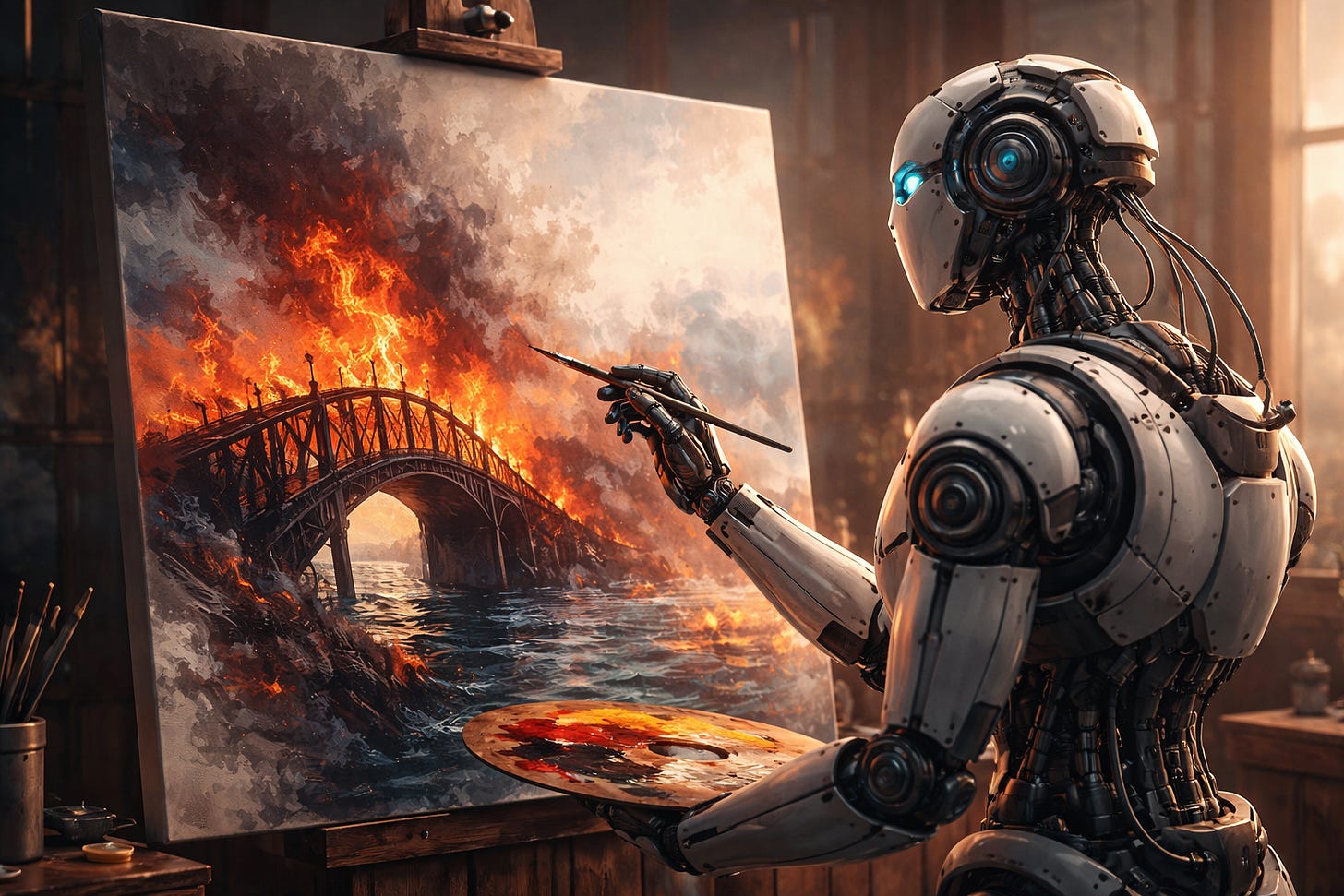

I think in many ways the fear is completely legitimate but, in some ways, could we argue it’s misdirected? The problem in education has never been that teachers were creating fantastic graphics for their teaching or their outside pursuits. Take, for example, one of my admonished images: it was of a bridge falling apart and on fire. To me this is the symbol of Droichead. When I was writing about it between 2015 and 2018, I was reliant on free clipart so I found crappy public domain clipart of a bridge and added crappy fire graphics. It looked crappy.

People will claim, for a variety of reasons, that it was better having a crappy image than a sloppy piece of AI-generated art, but we know the power of an image to generate interest.

No one asks whether the stock photos in newspapers or on school websites are authentic representations. When there is an article in the Irish Times about education, they use the same stock graphic most of the time. Nobody complains. In teaching and in life we have always used shortcuts. Maybe we just liked them better when they were invisible?

There is a useful distinction that often gets lost in these conversations. Using AI is not the same thing as outsourcing thinking. If I ask a tool to generate an article about education and publish it untouched, that is a problem. Not because it is AI, but because it is bland. I don’t think my style of writing is anything to write home about but it’s my style of writing. AI has a style of writing and we instinctively know when something is written in ChatGPT. Maybe those giving out about graphics being generated by AI have a point in that regard.

However, when a generated image is published to illustrate the point of an article, does it make an argument weaker? Perhaps it’s my own bias, but the only purpose of my AI images is to try and grasp the attention of the would-be reader. Articles that are accompanied by images and videos tend to be seen more than articles without. I inadvertently found this out when I stupidly used a URL shortening service. I mistakenly thought it would automatically show the featured image of my article but it didn’t. The only article that showed was the one with the original featured image. Needless to say, the number of people that read those without the featured image was minimal compared to those that did.

I think there’s also something to be said about criticism of “AI slop” full stop. Most educators are choosing between good enough and not done at all. I don’t think they are choosing between artisanal design and soulless automation. They don’t have the ability to create the images they wish to display and something is better than nothing. Again, this might be my own bias.

Having said that, if someone asked me should educators be using AI slop, I would have to pause. Ultimately, the honest answer is that we probably should be using judgement. If there already exists an image or video that more or less does the same thing, then adding another AI image is probably not reasonable. If there is no purpose to the image or video, perhaps we should ask ourselves about that too? I’m not sure where the line is. These are questions, rather than answers.

We know that AI is replacing a lot of jobs that were considered safe. Some include computer coding and digital art. It’s easy for someone that isn’t employed in these areas to tell people to move with the times but these are real jobs and we need to be mindful of this. However, we have been here before when the Internet came along and people thought their jobs were at risk, teachers included. The thing is, we have to take this new reality and see what we can do. For example, I can ask AI to write a simple app to do just about anything without understanding a line of code. However, if I’m doing anything beyond simple, I need a basic grasp of coding to make that happen. I feel the same way about art. Those with the skills should be able to harness this new technology to do really interesting things.

If calling something “AI slop” makes us stop and consider what we’re doing, and I can say that I have thought about it over the last week or two, that’s a positive thing. I hope my featured image shows that I have made an effort to think of an image that will pull a reader to this article. However, if calling out slop just becomes another way to police how educators survive an impossible workload, then it’s not a critique.

The more I think about AI, the more I find there is so much in common with when the Internet became available in schools. I see this as the next evolution in technology and, while we need to be mindful, I’m not convinced that it’s any different to before. I’m sure many of the same things were said about television when it came out. The difference, perhaps, is the pace of the change, and maybe that’s worth considering.